0x323 File System

- 1. Device

- 2. File System

- 4. POSIX

- 5. Windows

- 6. Reference

1. Device

Device can be divided into two groups:

- character device: no buffering is performed

- block device: there is a buffer cache, block devices must be random access

1.1. Device Driver

Cat /proc/devices can show all loaded device drivers in Linux.

2. File System

Overview of major file systems

Disk FS

- UNIX: FS, FFS

- Linux: ext2, ext3, ext4

- Windows: FAT12,16,32, exFAT, NTFS

- Apple: HFS, HFS+, APFS

- Other: ZFS, XFS

NAS: AFS, HDFS (Hadoop), GFS

Special: tmpfs, procfs...

The minimum element of disk available (from the OS perspective, it might be 512 byte sector from the physical view) is called block, which is usually 4KB (which is also the reason why empty dir size is 4KB)

Superblock contains the metadata of the disk and file system. In Linux, we can use the command dumpe2fs to see metadata details stored in the superblock. It contains information such as total inode count and total block count.

2.1. Very Simple File System

This section summarizes the vsfs (very simple file system) introduced in the chapter 40 of OSTEP

inode is the on-disk structures of a file system to store the metadata of files in unix like os. The inode info can be retrieved with stat(2). It is short for index node because originally they are stored in an array and indexed into when accessing a particular inode.

Note that Windows file systems do not have inode, they store metadata in the directory data structure (directory entry).

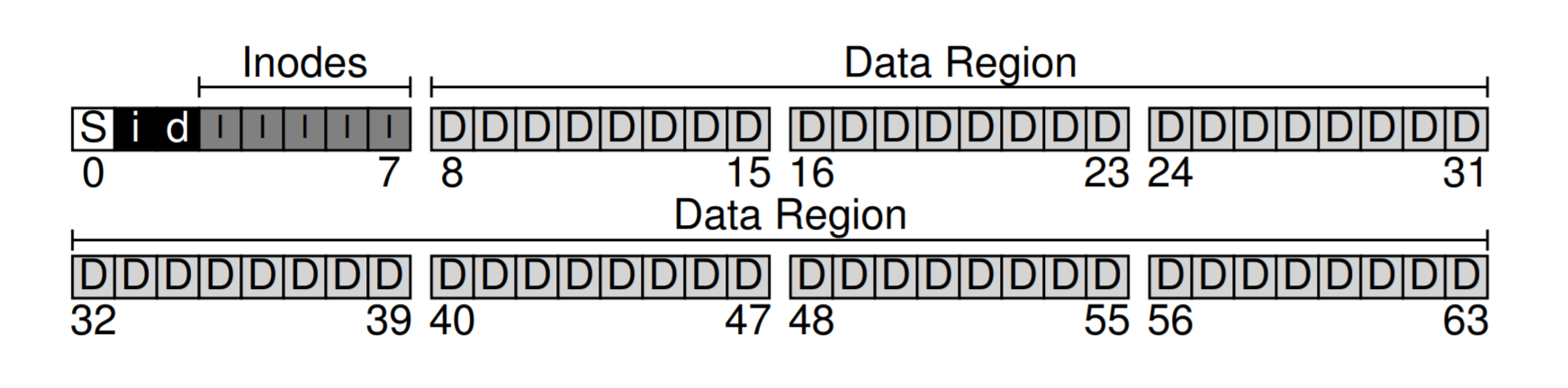

The VSFS organizes data structure as follows:

- S: superblock

- i: inode bitmap

- d: data bitmap

- I: inodes

- D: data

Each inode is given an number (i-number), in this fs, the i-number can be used to calculate where the inode is located in the disk.

Size of inode is usually 256 bytes.

How to manage large file block pointers ?

2.1.1. Data Block Management

One important decision is how the inode referes to where data blocks are:

- direct pointers: store limited disk pointers inside the inode directly.

- indirect pointers have a special pointer known as an indirect pointer, which pointing to a block that contains more pointers.

- multi-level index: extend the indirector pointer into multi-level. This is used in ext2,3.

- extents: store the start and end block for a contiguous ranges. This is used in ext4 and XFS.

- linked list: inside inode have a pointer pointing to the first block. Each block has a point to the next block. This is used in FAT

2.1.2. Directory

Directory also has an inode with the type field marked as directory. The directory has data blocks containing entry info such as i-number, file name and its length. Note that the entries do not need to be stored as a simple linear list, it can be B-tree like structures in XFS and ext4. linear structure is not very practical in terms of large number of files.

2.2. Journaling

A power loss or system crash might leave the system in an inconsistent state, this is called the crash consistency problem.

To overcome the inconsistency, one simple solution is to use fsck (file system checker), which repairs the file system before the it is mounted, but it is very slow as it scanns the entire disk.

A more common approach is to use Journaling or write-ahead logging. The basic idea is to write down a little note describing what you are about to do before updating the strucutres in place.

2.3. ext

ext4 features:

- use HTree (simplified B-tree: no rebalancing, constant depth B-tree) to organize file in directory

To create a ext4 file system on Linux

mkfs.ext4 /dev/sdb1

4. POSIX

4.1. File

4.1.1. File Attributes

API (stat, lstat, fstat (2))

- stat: return metadata (inode) about a file. require exec permission for all parent paths

- lstat: similar to stat, return info of a link itself if link specified

- fstat: info about a file descriptor

for example, ls uses this API to retrieve timestamps.

Notice the filename is not stored in inode here (it is stored in dirent instead)

// defined in sys/stat.h

struct stat {

mode_t st_mode;

ino_t st_ino;

dev_t st_dev;

dev_t st_rdev;

nlink_t st_nlink;

uid_t st_uid;

gid_t st_gid;

off_t st_size;

struct timespec st_atim;

struct timespec st_mtim;

struct timespec st_ctim;

blksize_t st_blksize;

blkcnt_t st_blocks;

};

atime

Note that atime (st_aim) denotes the access time, it was critisized a lot because it issues a write instruction to disk during reading. A new default option relatime was introduced in 2009 and this atime is no longer strictly means access time anymore. Its configuration is available in fstab

4.1.2. File IO

According to POSIX, I/O is intended to be atomic to ordinary files and pipes and FIFOs

syscall (open (2))

- O_RDONLY, O_WRONLY, O_RDWR: file access mode

- O_CREAT: create the file if not existed

- O_DIRECT: bypass OS page cache

- O_EXCL: use together with O_CREAT to prevent race condition of creation. Only one process will succeed when creating, others will fail.

- O_APPEND: append write is atomic for most file systems (e.g: NFS, HDFS are not).

syscall (pread/pwrite (2)) - read and write at a specific offset without modifying current offset in fd. - It is equivalent to atomically perform: lseek to the new offset, io, lseek to the original offset. useful for multithread applications.

syscall (readv/writev (2)) scatter/gather IO

- readv read from fd into multiple buffers atomically

- writev write from multiple buffers into fd atomically

syscall (fcntl (2)) perform control operations on an open file descriptor

syscall (rename (2))

rename a file, the underlying syscall of mv(1), this is an atomic call wrt system crash: the file is either after or before the rename. No mixing state will be allowed.

syscall (unlink (2))

delete a file, the underlying syscall of rm(1)

4.2. Directory

syscall (readdir (3)) read the entry for a directory

Notice this is section 3, not 2. readdir(2) defines a old syscall which is now depreciated.

glibc defines the dirent as follows:

struct dirent {

ino_t d_ino; /* Inode number */

off_t d_off; /* Not an offset; see below */

unsigned short d_reclen; /* Length of this record */

unsigned char d_type; /* Type of file; not supported

by all filesystem types */

char d_name[256]; /* Null-terminated filename */

};

Notice the name of an entry is upper bound by 255 (+1 null).

To get further info about this entry, use stat (which is exactly what ls -l is doing)

The dirent might pointing to a static memory, so we should not call free (3)

4.3. IO Multiplexing

Traditional IO are mostly blocking (e.g: read will go to sleep if the required data is not in the buffer cache), some exceptions are writing to the disk files, where the write return as soon as the requested data has been transfered to the kernel buffer.

So we need a way to monitor file descriptor whether it is availble iwthout blocking.

4.3.1. select

API (select(2)) synchronous multiplexing I/O

- blocking until some file descriptors are available to do I/O, otherwise timeout can be specified

- fd_set will be modified to only include available IO ready files

- set can be manipulated or queried with

FD_ZERO,FD_SET,FD_ISSET

int select(int nfds, fd_set *readfds, fd_set *writefds, fd_set *errorfds, struct timeval *timeout);

4.3.2. poll

API (poll(2)) similar to select, it waits for one of a set of file descriptors to become ready to perform I/O.

int poll (struct pollfd *fds, unsigned int nfds, int timeout);

pollfd is defined as follows. events is a bit mask specifying the event we want to monitor (e.g: POLLIN for read, POLLOUT for write)

struct pollfd {

int fd; /* file descriptor */

short events; /* requested events */

short revents; /* returned events */

};

4.3.3. epoll (linux)

from Kernel 2.6

Here is a blog about the importance of epoll

epoll has better performance: O(1) (vs O(n) in select/poll) improves poll by creating context in the kernel, so no need to build the sets and send everytime

epoll_createcreate contextepoll_ctl: add/remove file descriptorsepoll_wait: wait for events

epoll is linux-specific,

4.3.4. kqueue (FreeBSD)

4.3.5. Event Ports (Solaris)

4.3.6. IOCP (Windows)

4.4. Asynchronous IO

4.4.1. aio (posix)

allows applications to initiate one or more I/O operations that are performed asynchronously (i.e., in the background).

The application can elect to be notified of completion of the I/O operation in a variety of ways: by delivery of a signal, by instantiation of a thread, or no notification at all.

aio_read: enqueue a read request, analogous toread

4.4.2. io_uring

4.5. File Monitoring

inotify API is added to replace dnotify from kernel 2.6.

- inotify_init: create an inotify instance

- inotify_add_watch: add items to the watch lists read from the inotify file descriptor to retrieve inotify_event structs

4.6. Other IO

io_uring

4.7. Commands

lsof: list open files

lsblk: lists information about all available or the specified block devices.

5. Windows

File related API is defined at fileapi.h

5.1. File

win32 (CreateFileW) create or open a file or IO device

HANDLE CreateFileW(

LPCWSTR lpFileName,

DWORD dwDesiredAccess,

DWORD dwShareMode,

LPSECURITY_ATTRIBUTES lpSecurityAttributes,

DWORD dwCreationDisposition,

DWORD dwFlagsAndAttributes,

HANDLE hTemplateFile

);

each file can be stat with GetFileInformationByHandle

win32 (GetFileInformationByHandle) retrieve info for the specified file (like stat)

return value is the follows:

typedef struct _BY_HANDLE_FILE_INFORMATION {

DWORD dwFileAttributes;

FILETIME ftCreationTime;

FILETIME ftLastAccessTime;

FILETIME ftLastWriteTime;

DWORD dwVolumeSerialNumber;

DWORD nFileSizeHigh;

DWORD nFileSizeLow;

DWORD nNumberOfLinks;

DWORD nFileIndexHigh; // this high and low are volume uniqueID like inode number

DWORD nFileIndexLow;

} BY_HANDLE_FILE_INFORMATION, *PBY_HANDLE_FILE_INFORMATION, *LPBY_HANDLE_FILE_INFORMATION;

5.2. Directory

win32 (CreateDirectoryW) create a new directory with path

BOOL CreateDirectoryW(

LPCWSTR lpPathName,

LPSECURITY_ATTRIBUTES lpSecurityAttributes

);

6. Reference

[1] Operating Systems: Three Easy Pieces

[2] Kerrisk, Michael. The Linux programming interface: a Linux and UNIX system programming handbook. No Starch Press, 2010.